Where’s The Audio On Facebook?

Facebook’s F8 developer conference this year introduced us to the company’s 10-year plan which, in short, includes lasers, satellites, bots, and more.

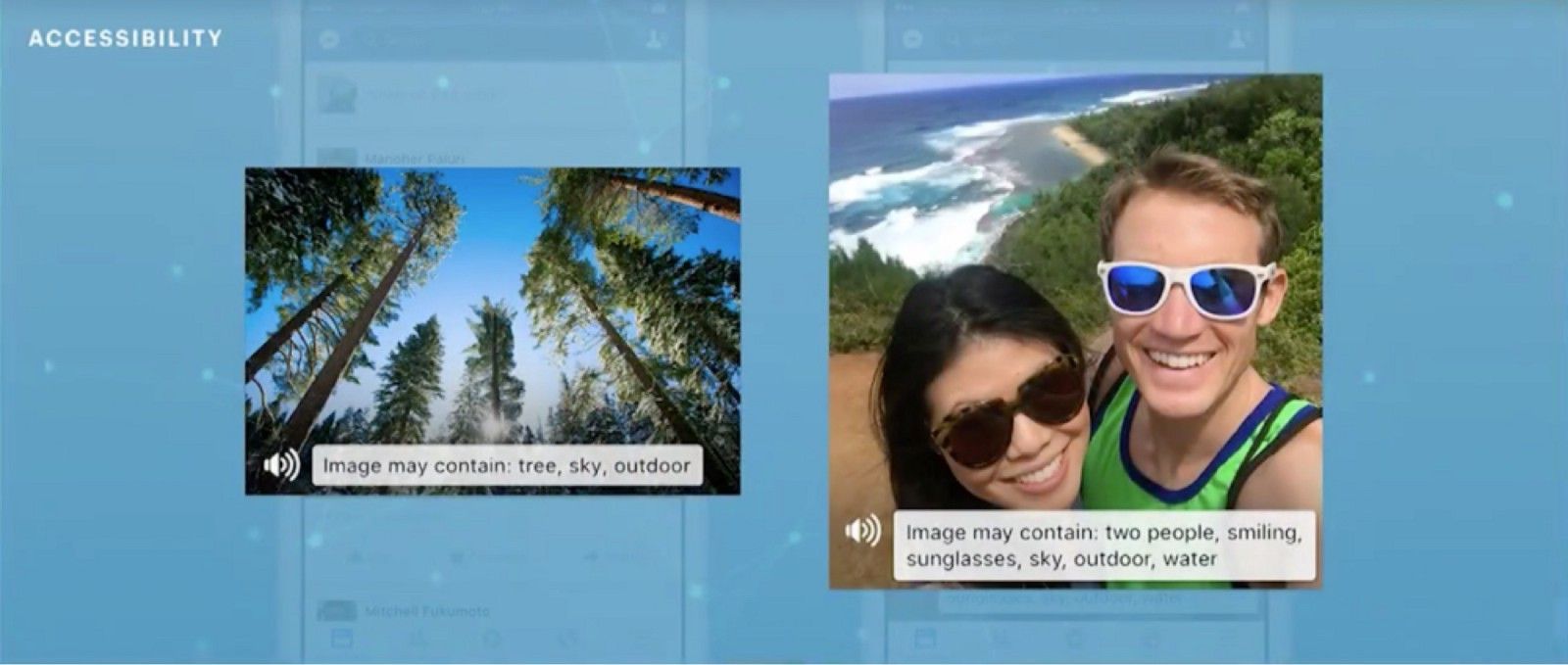

One of the less-discussed points that Mark Zuckerberg touched on in his keynote was accessibility in the main Facebook product. He highlighted machine learning and AI as a way for visually impaired users to be told the contents of images uploaded to Facebook, thanks to image recognition software.

This seems like a neat feature that’ll go some way toward helping the visually impaired consume Facebook, but it doesn’t go very far in allowing them to publish and contribute directly. The obvious medium for doing so is missing from the current tools for status updates: audio.

At F8, Facebook put such a large emphasis on live video and video publishing that audio appears to have been bypassed entirely.

Audio is an important medium, and not just for accessibility. It’s one of the reasons why podcasts have become such a hot topic, why apps such as Anchor have become popular, and why SoundCloud is the de facto destination for audio sharing on the web.

Imagine a Facebook where, rather than writing a status, you could publish your thoughts in spoken form (not dissimilar to Anchor), share an infant’s first words with family members, or play your latest musical idea. This is in line with Facebook’s vision of allowing free expression on its platform, and with its aim of letting anyone share anything there.

In keeping with the latest trend of going live, being able to broadcast live audio on Facebook would undoubtedly help podcasters and musicians better understand their audiences, thanks to Facebook’s trove of user data.